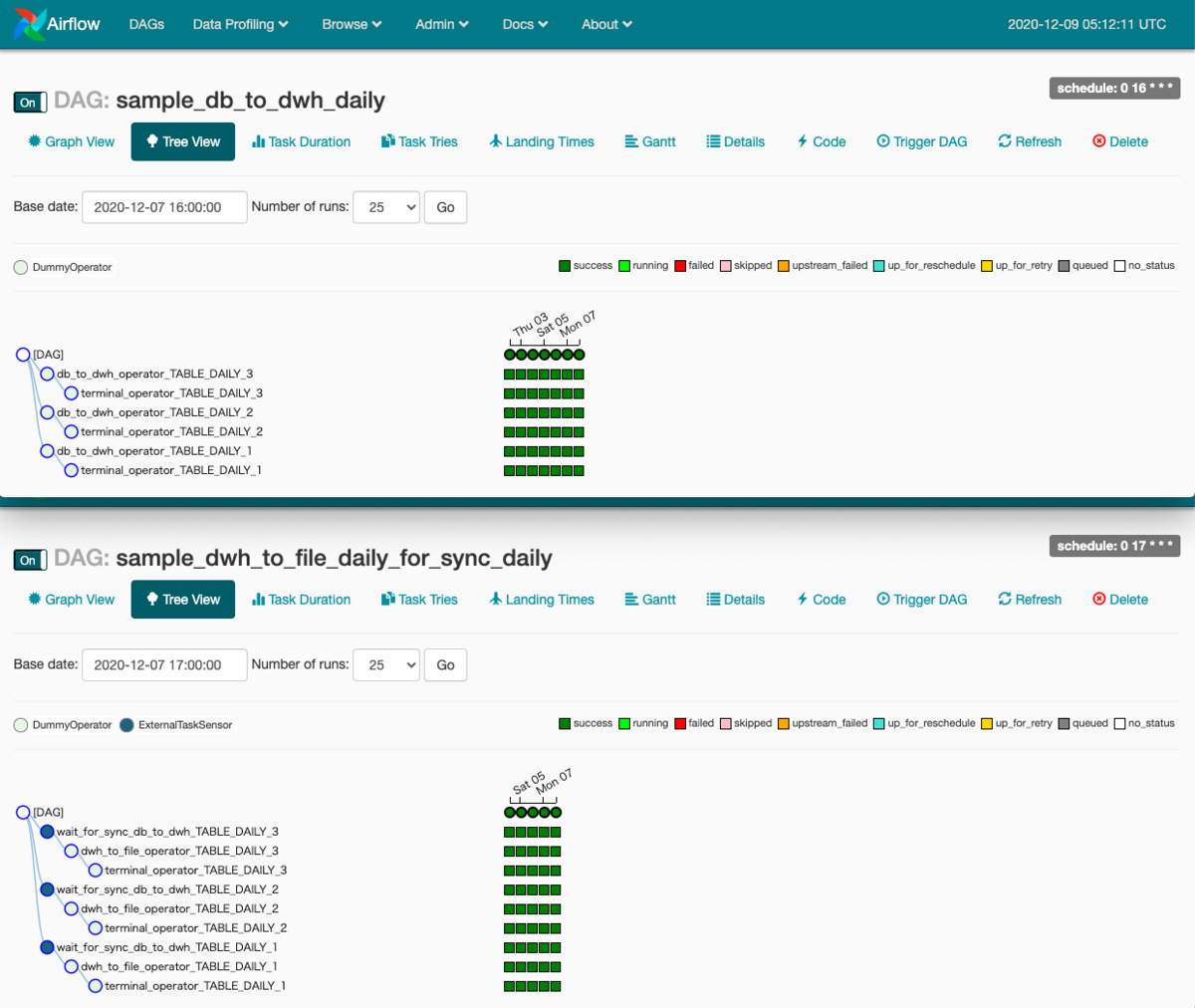

Apache Airflow is a relatively new, yet immensely popular, tool for scheduling workflows and automating tasks in a production environment. With Airflow, one can create Directed Acyclic Graphs, commonly called DAGs, which represent these workflows graphically. The underlying code is written in Python, which relies on calling libraries for scheduling tasks periodically.

One of the common challenges with any job scheduler is identifying workflows that run longer than their average run time. This is particularly important for the production team supporting day-to-day operations, because there is always a chance of missing that one nasty job out of the hundreds of jobs running in a live environment. This can even lead to a business SLA breach. You can solve this issue with proactive monitoring.

Airflow jobs are tracked by underlying relational tables that maintain the log for all the DAGs, the tasks within each DAG, running statistics, running status, etc. You can also query these tables using SQL statements through the Data Profiler option (under Ad-Hoc Query) in Airflow version 1.10.15+. In later versions of Airflow, the Data Profiler is disabled.

In the following example, the database is airflow_db. The snapshots of the relevant table give a view of all the DAGs that are currently running, and the output shows all the column information for the DAG airflow_monitoring.

Therefore, I took advantage of the above-mentioned “airflow_db” database, which can help figure out DAGs that are running longer than their usual run time. The basic idea is to run a background process over these tables and figure out which DAGs are in the execution state. For such DAGs in execution, I compared the current run time to the average run time. If the current run time exceeds the average run time by a considerable margin, then we have a DAG of interest which needs to be investigated. There’s a caveat here: the average run time for each DAG was previously calculated and stored in another, manually created table.

This entire process is now encapsulated within a new DAG that monitors the environment in which it is deployed and is executed at regular intervals. In this case, I have set it to run on an hourly basis. The following are the two DAGs created for overcoming the above problem:

load_data_from_airflow_to_bq (monthly DAG run): I’ve used the “dag_run” table of the “airflow_db” database to get all the individual successful DAG run jobs in the past 30 days.

Each DAG run in Airflow has an assigned “data interval” that represents the time range it operates in. For a DAG scheduled daily, for example, each of its data intervals would start at midnight (00:00) and end at the following midnight.

A DAG run is usually scheduled after its associated data interval has ended, to ensure the run is able to collect all the data within the time period. In other words, a run covering the data period of a given day generally does not start until that day has ended, that is, after 00:00:00 on the following day.

All dates in Airflow are tied to the data interval concept in some way. The “logical date” (also called execution_date in Airflow versions prior to 2.2) of a DAG run, for example, denotes the start of the data interval, not when the DAG is actually executed. Similarly, since the start_date argument for the DAG and its tasks points to the same logical date, it marks the start of the DAG’s first data interval, not when tasks in the DAG will start running.

Consider the following DAG definition, which sets catchup to False:

    """
    Code that goes along with the Airflow tutorial located at:
    """
    import datetime

    import pendulum

    # Module paths were lost in the source; these are the usual Airflow 2.x locations.
    from airflow import DAG
    from airflow.operators.bash import BashOperator

    dag = DAG(
        "tutorial",
        default_args={},  # contents were lost in the source
        start_date=pendulum.datetime(2015, 12, 1, tz="UTC"),
        description="A simple tutorial DAG",
        schedule="@daily",  # value lost in the source; daily assumed from the surrounding text
        catchup=False,
    )

In the example above, if the DAG is picked up by the scheduler daemon at 6 AM on some later day (or from the command line), a single DAG Run will be created, with a data interval covering the most recent completed day. The next one will be created just after midnight on the following morning, with a data interval covering the day that has just ended.

If the dag.catchup value had been True instead, the scheduler would have created a DAG Run for each completed interval between 2015-12-01 and the day the DAG was picked up (but not yet one for that day itself, as that interval hasn’t completed), and the scheduler would execute them sequentially.

Catchup is also triggered when you turn off a DAG for a specified period and then re-enable it. This behavior is great for atomic datasets that can easily be split into periods.

Some of the tasks can fail during the scheduled run. After going through the logs and fixing the errors, you can re-run the tasks by clearing them. Clearing a task instance doesn’t delete the task instance record. Instead, it updates max_tries to 0 and sets the current task instance state to None, which causes the task to re-run. Click on the failed task in the Tree or Graph views and then click on Clear.
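To make the data interval and catchup behaviour concrete, here is a minimal sketch in plain Python. It does not use Airflow's scheduler or any Airflow API. The helper names `completed_daily_intervals` and `runs_to_create` are hypothetical, and the pickup time is an arbitrary value chosen for illustration. It only models the rule described in the text: a daily run is created once its interval has ended, and turning catchup off keeps only the most recent completed interval.

```python
from datetime import datetime, timedelta, timezone


def completed_daily_intervals(start_date, now):
    """Return (interval_start, interval_end) pairs for every daily
    interval that has fully ended by `now`."""
    intervals = []
    interval_start = start_date
    while interval_start + timedelta(days=1) <= now:
        interval_end = interval_start + timedelta(days=1)
        intervals.append((interval_start, interval_end))
        interval_start = interval_end
    return intervals


def runs_to_create(start_date, now, catchup):
    """With catchup, one run per completed interval; without it, only the latest."""
    intervals = completed_daily_intervals(start_date, now)
    return intervals if catchup else intervals[-1:]


# start_date mirrors the tutorial DAG; the pickup time is an assumption.
start = datetime(2015, 12, 1, tzinfo=timezone.utc)
picked_up = start + timedelta(days=3, hours=6)  # 6 AM, three full days later

no_catchup = runs_to_create(start, picked_up, catchup=False)
with_catchup = runs_to_create(start, picked_up, catchup=True)

for interval_start, interval_end in with_catchup:
    # The logical date of each run is the start of its data interval.
    print(f"logical date {interval_start:%Y-%m-%d}, interval ends {interval_end:%Y-%m-%d}")
```

In this sketch the interval that contains the pickup time is never returned, because it has not ended yet. This matches the point above that a run for the current day is not created until that day is over.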

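The long-running DAG check described in this post (compare each running DAG's current run time with its stored average) can be sketched as follows. This is an illustration under stated assumptions, not the author's actual monitoring DAG. The function name `find_long_running`, the 1.5x `margin`, and the sample DAG names and timings are all hypothetical. In practice the running rows would presumably come from a query on the dag_run table for runs still in the running state, and the averages from the manually created table mentioned in the text.

```python
from datetime import datetime, timedelta, timezone


def find_long_running(running_runs, average_seconds, now, margin=1.5):
    """Flag running DAGs whose elapsed time exceeds margin * average.

    running_runs: iterable of (dag_id, start_date) for runs still executing.
    average_seconds: dict mapping dag_id to its average run time in seconds.
    margin: assumed threshold factor; the post only says "considerable margin".
    """
    flagged = []
    for dag_id, start_date in running_runs:
        average = average_seconds.get(dag_id)
        if average is None:
            continue  # no baseline stored for this DAG yet, so it cannot be judged
        elapsed = (now - start_date).total_seconds()
        if elapsed > margin * average:
            flagged.append((dag_id, elapsed, average))
    return flagged


# Hypothetical sample data standing in for rows read from the metadata tables.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
running = [
    ("daily_sales_load", now - timedelta(minutes=50)),
    ("hourly_sync", now - timedelta(minutes=4)),
    ("new_dag_without_history", now - timedelta(hours=3)),
]
averages = {"daily_sales_load": 20 * 60, "hourly_sync": 5 * 60}

flagged = find_long_running(running, averages, now)
for dag_id, elapsed, average in flagged:
    print(f"{dag_id}: running {elapsed / 60:.0f} min, average {average / 60:.0f} min")
```

Skipping DAGs that have no stored average is a design choice of this sketch. A real monitor might instead report them separately so that new DAGs are not silently ignored.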